Debugging high CPU usage using Perf Tool and Vmcore Analysis

There are several tools and technologies available to dig deeper into high CPU utilization in a system: perf, sysrq, oprofile, vmcore, and more. In this post, I will narrate the course of debugging a CPU utilization issue using perf and vmcore analysis.

The following sar output is from a system that showed high %system usage.

    Linux 2.6.32-431.20.5.el6.x86_64 (xxxxx) 08/08/2014 _x86_64_ (8 CPU)

    05:04:57 PM  CPU  %user  %nice  %system  %iowait  %steal  %idle
    05:04:58 PM  all   2.90   0.00    15.01     0.38    0.00  81.72
    05:04:59 PM  all   2.02   0.00    10.83     0.13    0.00  87.03
    05:05:00 PM  all   3.27   0.00    13.98     0.76    0.00  81.99
    05:05:01 PM  all   9.32   0.00    16.62     0.25    0.00  73.80

From ‘man sar’:

%system: Percentage of CPU utilization that occurred while executing at the system level (kernel). Note that this field includes time spent servicing hardware and software interrupts.

This means that the system was spending considerable time executing kernel code. The system runs a Java application, which was showing high CPU usage.
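To put a single number on sar output like the sample above, the %system column can be averaged with a few lines of Python. This is only a minimal sketch: it assumes the column layout shown in the sample, and the function name `mean_system_pct` is hypothetical.

```python
SAR_SAMPLE = """\
05:04:57 PM CPU %user %nice %system %iowait %steal %idle
05:04:58 PM all 2.90 0.00 15.01 0.38 0.00 81.72
05:04:59 PM all 2.02 0.00 10.83 0.13 0.00 87.03
05:05:00 PM all 3.27 0.00 13.98 0.76 0.00 81.99
05:05:01 PM all 9.32 0.00 16.62 0.25 0.00 73.80
"""


def mean_system_pct(sar_text):
    """Average the %system column over all 'all'-CPU rows of sar output."""
    header = None
    values = []
    for line in sar_text.splitlines():
        fields = line.split()
        if "%system" in fields:
            header = fields          # remember column positions from the header row
            continue
        if header is None or len(fields) != len(header):
            continue                 # skip banner, blank and malformed lines
        if fields[header.index("CPU")] != "all":
            continue                 # ignore per-CPU rows, keep the aggregate
        values.append(float(fields[header.index("%system")]))
    if not values:
        raise ValueError("no sar data rows found")
    return sum(values) / len(values)


if __name__ == "__main__":
    print(f"mean %system: {mean_system_pct(SAR_SAMPLE):.2f}")
```

Looking the column up by its header name rather than by a fixed position keeps the sketch usable if sar prints additional columns.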

perf (performance analysis tools for Linux) is a good place to start in these kinds of scenarios.

The ‘perf record’ command samples the running system and saves the profile in a perf.data file. The -g option records call graphs, and -p restricts profiling to a given process.

The ‘perf report’ command then shows the report.

    Samples: 18K of event 'cpu-clock', Event count (approx.): 18445, Thread: java(3284), DSO: [kernel.kallsyms]
    58.66%  java  [k] _spin_lock
    31.82%  java  [k] find_inode
     2.66%  java  [k] _spin_unlock_irqrestore
     2.44%  java  [k] mutex_spin_on_owner

Here we can see that considerable time was spent in spinlock and find_inode code for the java application.
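To see how much of the profile the top kernel symbols account for, the report rows can be summed up. The following is a rough sketch that assumes the condensed row layout shown above (overhead, command, `[k]` marker, symbol); the names `ROW` and `kernel_overhead_by_symbol` are made up for this illustration.

```python
import re

PERF_REPORT = """\
58.66% java [k] _spin_lock
31.82% java [k] find_inode
2.66% java [k] _spin_unlock_irqrestore
2.44% java [k] mutex_spin_on_owner
"""

# One report row: overhead percentage, command, [k]/[.] marker, symbol name.
ROW = re.compile(r"^\s*(\d+(?:\.\d+)?)%\s+(\S+)\s+\[(.)\]\s+(\S+)")


def kernel_overhead_by_symbol(report_text):
    """Return {symbol: overhead %} for rows marked [k] (kernel space)."""
    overhead = {}
    for line in report_text.splitlines():
        match = ROW.match(line)
        if match and match.group(3) == "k":
            symbol = match.group(4)
            overhead[symbol] = overhead.get(symbol, 0.0) + float(match.group(1))
    return overhead


if __name__ == "__main__":
    by_symbol = kernel_overhead_by_symbol(PERF_REPORT)
    top_two = sorted(by_symbol.values(), reverse=True)[:2]
    print(f"top two kernel symbols: {sum(top_two):.2f}% of samples")
```

In this profile, _spin_lock and find_inode together account for roughly 90% of the kernel samples of the java thread, which is why the rest of the investigation concentrates on inode handling and lock contention.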

While the investigation was going on, the system crashed and dumped a vmcore. A vmcore is a memory dump of the system captured by tools like kdump.

I downloaded the debuginfo file and extracted the vmlinux to analyse the vmcore.

    # wget https://debuginfo.centos.org/6/x86_64/kernel-debuginfo-2.6.32-431.20.5.el6.x86_64.rpm
    # rpm2cpio kernel-debuginfo-2.6.32-431.20.5.el6.x86_64.rpm | cpio -idv ./usr/lib/debug/lib/modules/2.6.32-431.20.5.el6.x86_64/vmlinux

Then I ran the following command.

    # crash ./usr/lib/debug/lib/modules/2.6.32-431.20.5.el6.x86_64/vmlinux /var/crash/127.0.0.1-2014-08-07-17\:56\:19/vmcore

    KERNEL: ./usr/lib/debug/lib/modules/2.6.32-431.20.5.el6.x86_64/vmlinux
    DUMPFILE: /var/crash/127.0.0.1-2014-08-07-17:56:19/vmcore [PARTIAL DUMP]
    CPUS: 8
    DATE: Thu Aug 7 17:56:17 2014
    UPTIME: 1 days, 13:08:01
    LOAD AVERAGE: 91.11, 91.54, 98.02
    TASKS: 1417
    NODENAME: xxxxx
    RELEASE: 2.6.32-431.20.5.el6.x86_64
    VERSION: #1 SMP Fri Jul 25 08:34:44 UTC 2014
    MACHINE: x86_64 (2000 Mhz)
    MEMORY: 12 GB
    PANIC: "Oops: 0010 [#1] SMP " (check log for details)
    PID: 11233
    COMMAND: "java"
    TASK: ffff88019706b540 [THREAD_INFO: ffff880037a90000]
    CPU: 6
    STATE: TASK_RUNNING (PANIC)

From the vmcore I see that the dtracedrv module was loaded and unloaded (possibly for running dtrace). This resulted in several warnings (the first warning, from ftrace, is expected), and then the kernel panicked. The instruction pointer is corrupted, which points to memory corruption. It looks like the panic was triggered by the dtrace module.

    /tmp/dtrace/linux-master/build-2.6.32-431.20.5.el6.x86_64/driver/dtrace.c:dtrace_ioctl:16858: assertion failure buf->dtb_xamot != cached
    Pid: 8442, comm: dtrace Tainted: P W --------------- 2.6.32-431.20.5.el6.x86_64 #1
    Pid: 3481, comm: java Tainted: P W --------------- 2.6.32-431.20.5.el6.x86_64 #1
    Call Trace:
    [] ? dump_cpu_stack+0x3d/0x50 [dtracedrv]
    [] ? generic_smp_call_function_interrupt+0x90/0x1b0
    [] ? smp_call_function_interrupt+0x27/0x40
    [] ? call_function_interrupt+0x13/0x20
    [] ? _spin_lock+0x1e/0x30
    [] ? __mark_inode_dirty+0x6c/0x160
    [] ? __set_page_dirty_nobuffers+0xdd/0x160
    [] ? nfs_mark_request_dirty+0x1a/0x40 [nfs]
    [] ? nfs_updatepage+0x3d2/0x560 [nfs]
    [] ? nfs_write_end+0x152/0x2b0 [nfs]
    [] ? iov_iter_copy_from_user_atomic+0x92/0x130
    [] ? generic_file_buffered_write+0x18a/0x2e0
    [] ? nfs_refresh_inode_locked+0x3e1/0xbd0 [nfs]
    [] ? __generic_file_aio_write+0x260/0x490
    [] ? __put_nfs_open_context+0x58/0x110 [nfs]
    [] ? dtrace_vcanload+0x20/0x1a0 [dtracedrv]
    [..]
    BUG: unable to handle kernel paging request at ffffc90014fb415e
    IP: [] 0xffffc90014fb415e
    PGD 33c2b5067 PUD 33c2b6067 PMD 3e688067 PTE 0
    Oops: 0010 [#1] SMP
    last sysfs file: /sys/devices/system/node/node0/meminfo
    CPU 6
    Modules linked in: cpufreq_stats freq_table nfs fscache nfsd lockd nfs_acl auth_rpcgss sunrpc exportfs ipv6 ppdev parport_pc parport microcode vmware_balloon sg vmxnet3 i2c_piix4 i2c_core shpchp ext4 jbd2 mbcache sd_mod crc_t10dif vmw_pvscsi pata_acpi ata_generic ata_piix dm_mirror dm_region_hash dm_log dm_mod [last unloaded: dtracedrv]

    Pid: 11233, comm: java Tainted: P W --------------- 2.6.32-431.20.5.el6.x86_64 #1 VMware, Inc. VMware Virtual Platform/440BX Desktop Reference Platform
    RIP: 0010:[] [] 0xffffc90014fb415e
    RSP: 0018:ffff880037a91f70 EFLAGS: 00010246
    RAX: 0000000000000001 RBX: 0000000000000219 RCX: ffff880037a91d40
    RDX: 0000000000000001 RSI: 0000000000000001 RDI: 0000000000000000
    RBP: 00007fba9a67f4c0 R08: 0000000000000000 R09: 0000000000000001
    R10: 0000000000000000 R11: 00000000000003ff R12: 000000000001d4c0
    R13: 0000000000000219 R14: 00007fb96feb06e0 R15: 00007fb96feb06d8
    FS: 00007fb96fec1700(0000) GS:ffff880028380000(0000) knlGS:0000000000000000
    CS: 0010 DS: 0000 ES: 0000 CR0: 000000008005003b
    CR2: ffffc90014fb415e CR3: 000000031e49e000 CR4: 00000000000407e0
    DR0: 0000000000000000 DR1: 0000000000000000 DR2: 0000000000000000
    DR3: 0000000000000000 DR6: 00000000ffff0ff0 DR7: 0000000000000400
    Process java (pid: 11233, threadinfo ffff880037a90000, task ffff88019706b540)
    Stack:
    0000000000000000 0000000000002be1 ffffffff8100b072 0000000000000293
    000000000000ebe6 0000000000002be1 0000000000000000 0000000000000007
    00000030692df333 000000000001d4c0 0000000000000001 00007fb96feb06d8
    Call Trace:
    [] ? system_call_fastpath+0x16/0x1b
    Code: Bad RIP value.
    RIP [] 0xffffc90014fb415e
    RSP
    CR2: ffffc90014fb415e

This allowed me to have a look at the CPU usage issue happening in the system. Another way to capture a vmcore is to manually panic the system using sysrq + c.

None of the runnable or uninterruptible-sleep processes had been running for a long time.

Looking at the oldest D state process:

    crash> bt 4776
    PID: 4776 TASK: ffff88027f3daaa0 CPU: 6 COMMAND: "java"
     #0 [ffff88027f3dfd88] schedule at ffffffff815287f0
     #1 [ffff88027f3dfe50] __mutex_lock_killable_slowpath at ffffffff8152a0ee
     #2 [ffff88027f3dfec0] mutex_lock_killable at ffffffff8152a1f8
     #3 [ffff88027f3dfee0] vfs_readdir at ffffffff8119f834
     #4 [ffff88027f3dff30] sys_getdents at ffffffff8119f9f9
     #5 [ffff88027f3dff80] system_call_fastpath at ffffffff8100b072
        RIP: 00000030692a90e5 RSP: 00007fa0586c51e0 RFLAGS: 00000206
        RAX: 000000000000004e RBX: ffffffff8100b072 RCX: 00007fa0cd2cf000
        RDX: 0000000000008000 RSI: 00007fa0bc0de9a8 RDI: 00000000000001f6
        RBP: 00007fa0bc004cd0 R8: 00007fa0bc0de9a8 R9: 00007fa0cd2fce58
        R10: 00007fa0cd2fcaa8 R11: 0000000000000246 R12: 00007fa0bc004cd0
        R13: 00007fa0586c5460 R14: 00007fa0cd2cf1c8 R15: 00007fa0bc0de980
        ORIG_RAX: 000000000000004e CS: 0033 SS: 002b

Looking at its stack:

    crash> bt -f 4776
    PID: 4776 TASK: ffff88027f3daaa0 CPU: 6 COMMAND: "java"
    [..]
     #2 [ffff88027f3dfec0] mutex_lock_killable at ffffffff8152a1f8
        ffff88027f3dfec8: ffff88027f3dfed8 ffff8801401e1600
        ffff88027f3dfed8: ffff88027f3dff28 ffffffff8119f834
     #3 [ffff88027f3dfee0] vfs_readdir at ffffffff8119f834
        ffff88027f3dfee8: ffff88027f3dff08 ffffffff81196826
        ffff88027f3dfef8: 00000000000001f6 00007fa0bc0de9a8
        ffff88027f3dff08: ffff8801401e1600 0000000000008000
        ffff88027f3dff18: 00007fa0bc004cd0 ffffffffffffffa8
        ffff88027f3dff28: ffff88027f3dff78 ffffffff8119f9f9
     #4 [ffff88027f3dff30] sys_getdents at ffffffff8119f9f9
        ffff88027f3dff38: 00007fa0bc0de9a8 0000000000000000
        ffff88027f3dff48: 0000000000008000 0000000000000000
        ffff88027f3dff58: 00007fa0bc0de980 00007fa0cd2cf1c8
        ffff88027f3dff68: 00007fa0586c5460 00007fa0bc004cd0
        ffff88027f3dff78: 00007fa0bc004cd0 ffffffff8100b072

vfs_readdir takes a struct file pointer as its first argument, so the value ffff8801401e1600 seen on the stack can be examined as a struct file.

    crash> vfs_readdir
    vfs_readdir = $4 =
     {int (struct file *, filldir_t, void *)} 0xffffffff8119f7b0
    crash> struct file 0xffff8801401e1600
    struct file {
      f_u = {
        fu_list = {
          next = 0xffff88033213fce8,
          prev = 0xffff88031823d740
        },
        fu_rcuhead = {
          next = 0xffff88033213fce8,
          func = 0xffff88031823d740
        }
      },
      f_path = {
        mnt = 0xffff880332368080,
        dentry = 0xffff8802e2aaae00
      },
    [..]
    crash> mount | grep ffff880332368080
    ffff880332368080 ffff88033213fc00 nfs nanas1a.m-qube.com:/vol/test /scratch/test/test.deploy/test/test-internal

The process was waiting while reading a directory on the above NFS mount.

The following process seems to be the culprit.

    crash> bt 9104
    PID: 9104 TASK: ffff8803323c8ae0 CPU: 0 COMMAND: "java"
     #0 [ffff880028207e90] crash_nmi_callback at ffffffff8102fee6
     #1 [ffff880028207ea0] notifier_call_chain at ffffffff8152e435
     #2 [ffff880028207ee0] atomic_notifier_call_chain at ffffffff8152e49a
     #3 [ffff880028207ef0] notify_die at ffffffff810a11ce
     #4 [ffff880028207f20] do_nmi at ffffffff8152c0fb
     #5 [ffff880028207f50] nmi at ffffffff8152b9c0
        [exception RIP: _spin_lock+30]
        RIP: ffffffff8152b22e RSP: ffff88001d209b88 RFLAGS: 00000206
        RAX: 0000000000000004 RBX: ffff88005823dd90 RCX: ffff88005823dd78
        RDX: 0000000000000000 RSI: ffffffff81fd0820 RDI: ffffffff81fd0820
        RBP: ffff88001d209b88 R8: ffff88017b9cfa90 R9: dead000000200200
        R10: 0000000000000000 R11: 0000000000000000 R12: ffff88005823dd48
        R13: ffff88001d209c68 R14: ffff8803374ba4f8 R15: 0000000000000000
        ORIG_RAX: ffffffffffffffff CS: 0010 SS: 0018
    --- ---
     #6 [ffff88001d209b88] _spin_lock at ffffffff8152b22e
     #7 [ffff88001d209b90] _atomic_dec_and_lock at ffffffff81283095
     #8 [ffff88001d209bc0] iput at ffffffff811a5aa0
     #9 [ffff88001d209be0] dentry_iput at ffffffff811a26c0
    #10 [ffff88001d209c00] d_kill at ffffffff811a2821
    #11 [ffff88001d209c20] __shrink_dcache_sb at ffffffff811a2bb6
    #12 [ffff88001d209cc0] shrink_dcache_parent at ffffffff811a2f64
    #13 [ffff88001d209d30] proc_flush_task at ffffffff811f9195
    #14 [ffff88001d209dd0] release_task at ffffffff81074ec8
    #15 [ffff88001d209e10] wait_consider_task at ffffffff81075cc6
    #16 [ffff88001d209e80] do_wait at ffffffff810760f6
    #17 [ffff88001d209ee0] sys_wait4 at ffffffff810762e3
    #18 [ffff88001d209f80] system_call_fastpath at ffffffff8100b072

From upstream kernel source:

    /**
     * iput - put an inode
     * @inode: inode to put
     *
     * Puts an inode, dropping its usage count. If the inode use count hits
     * zero, the inode is then freed and may also be destroyed.
     *
     * Consequently, iput() can sleep.
     */
    void iput(struct inode *inode)
    {
        if (inode) {
            BUG_ON(inode->i_state & I_CLEAR);

            if (atomic_dec_and_lock(&inode->i_count, &inode->i_lock))
                iput_final(inode);
        }
    }
    EXPORT_SYMBOL(iput);

    #include
    /**
     * atomic_dec_and_lock - lock on reaching reference count zero
     * @atomic: the atomic counter
     * @lock: the spinlock in question
     *
     * Decrements @atomic by 1. If the result is 0, returns true and locks
     * @lock. Returns false for all other cases.
     */
    extern int _atomic_dec_and_lock(atomic_t *atomic, spinlock_t *lock);
    #define atomic_dec_and_lock(atomic, lock) \
            __cond_lock(lock, _atomic_dec_and_lock(atomic, lock))

    #endif /* __LINUX_SPINLOCK_H */

It looks like the process was trying to shrink the dentry cache and was holding on to the spinlock while dropping an inode associated with a dentry. Other processes were left waiting on the same spinlock, which resulted in high %system utilization.

When the system again showed high %system usage, I checked and found a large slab cache.

    [..]
    Slab: 4505788 kB
    SReclaimable: 4313672 kB
    SUnreclaim: 192116 kB
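The share of slab memory that is reclaimable is a useful hint here, because dentry and inode caches are counted as reclaimable slab. A small sketch that computes it from the three values above follows; the parser assumes the usual `Key: value kB` layout of /proc/meminfo, and the function names are hypothetical.

```python
MEMINFO_SAMPLE = """\
Slab: 4505788 kB
SReclaimable: 4313672 kB
SUnreclaim: 192116 kB
"""


def parse_meminfo(text):
    """Parse 'Key: value kB' lines into a {key: kilobytes} dict."""
    fields = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts and parts[0].isdigit():
            fields[key.strip()] = int(parts[0])
    return fields


def reclaimable_slab_fraction(fields):
    """Fraction of slab memory that the kernel reports as reclaimable."""
    return fields["SReclaimable"] / fields["Slab"]


if __name__ == "__main__":
    info = parse_meminfo(MEMINFO_SAMPLE)
    print(f"slab: {info['Slab'] / 1024 / 1024:.1f} GiB, "
          f"{reclaimable_slab_fraction(info):.0%} reclaimable")
```

On a live system the same functions could be fed the contents of /proc/meminfo, for example `parse_meminfo(open("/proc/meminfo").read())`.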

Checking slab usage on the running system with slabtop, I saw that nfs_inode_cache was the top consumer.
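slabtop gets its data from /proc/slabinfo, so a similar ranking can be approximated by hand. The sketch below assumes the slabinfo 2.x column layout (name, active_objs, num_objs, objsize, ...) and estimates each cache as num_objs * objsize, which is not exactly how slabtop computes its cache size. The sample rows are made-up numbers for illustration only, not values from the system in this post, and `top_slab_caches` is a hypothetical name.

```python
# Made-up sample in /proc/slabinfo 2.x layout, NOT taken from the system above.
SLABINFO_SAMPLE = """\
slabinfo - version: 2.1
# name <active_objs> <num_objs> <objsize> <objperslab> <pagesperslab> : tunables <limit> <batchcount> <sharedfactor> : slabdata <active_slabs> <num_slabs> <sharedavail>
nfs_inode_cache 1000 1000 1048 3 1 : tunables 24 12 8 : slabdata 334 334 0
dentry 2000 2000 192 20 1 : tunables 120 60 8 : slabdata 100 100 0
"""


def top_slab_caches(slabinfo_text, count=5):
    """Return [(cache name, approx. bytes)] sorted by num_objs * objsize."""
    caches = []
    for line in slabinfo_text.splitlines():
        if line.startswith(("slabinfo", "#")):
            continue                       # skip the two header lines
        fields = line.split()
        if len(fields) < 4:
            continue                       # skip blank or truncated lines
        name, num_objs, objsize = fields[0], int(fields[2]), int(fields[3])
        caches.append((name, num_objs * objsize))
    return sorted(caches, key=lambda item: item[1], reverse=True)[:count]


if __name__ == "__main__":
    for name, size in top_slab_caches(SLABINFO_SAMPLE):
        print(f"{name:<20} {size / 1024:.0f} KiB")
```

Reading the real /proc/slabinfo typically requires root privileges.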

    [root@xxxxx ~]# sar 1 10
    Linux 3.10.50-1.el6.elrepo.x86_64 (prod-smsgw4.sav.mqube.us) 08/12/2014 _x86_64_ (8 CPU)

    11:04:45 AM  CPU  %user  %nice  %system  %iowait  %steal  %idle
    11:04:46 AM  all   1.51   0.00    13.22     0.50    0.00  84.76
    11:04:47 AM  all   1.25   0.00    12.55     0.13    0.00  86.07
    11:04:48 AM  all   1.26   0.00     8.83     0.25    0.00  89.66
    11:04:49 AM  all   1.63   0.00    11.93     0.63    0.00  85.80
    ^C
    [root@xxxxx ~]# sync
    [root@xxxxx ~]# sar 1 10
    Linux 3.10.50-1.el6.elrepo.x86_64 (prod-smsgw4.sav.mqube.us) 08/12/2014 _x86_64_ (8 CPU)

    11:05:23 AM  CPU  %user  %nice  %system  %iowait  %steal  %idle
    11:05:24 AM  all   1.50   0.00    13.03     0.75    0.00  84.71
    11:05:25 AM  all   1.76   0.00     9.69     0.25    0.00  88.30
    11:05:26 AM  all   1.51   0.00     9.80     0.25    0.00  88.44
    11:05:27 AM  all   1.13   0.00    10.03     0.25    0.00  88.60
    ^C

Dropping the dentry and inode caches using the following command fixed the issue.

    [root@xxxxx ~]# echo 2 > /proc/sys/vm/drop_caches
    [root@xxxxx ~]# cat /proc/meminfo
    [..]
    Slab: 67660 kB
    [root@prod-smsgw4 ~]# sar 1 10
    Linux 3.10.50-1.el6.elrepo.x86_64 (prod-smsgw4.sav.mqube.us) 08/12/2014 _x86_64_ (8 CPU)

    11:05:58 AM  CPU  %user  %nice  %system  %iowait  %steal  %idle
    11:05:59 AM  all   1.64   0.00     1.38     0.13    0.00  96.86
    11:06:00 AM  all   2.64   0.00     1.38     0.38    0.00  95.60
    11:06:01 AM  all   2.02   0.00     1.89     0.25    0.00  95.84
    11:06:02 AM  all   2.03   0.00     1.39     4.68    0.00  91.90
    11:06:03 AM  all   8.21   0.00     2.27     2.65    0.00  86.87
    11:06:04 AM  all   1.63   0.00     1.38     0.13    0.00  96.86
    11:06:05 AM  all   2.64   0.00     1.51     0.25    0.00  95.60

From kernel documentation,

    Writing to this will cause the kernel to drop clean caches, dentries and
    inodes from memory, causing that memory to become free.

    To free pagecache:
        echo 1 > /proc/sys/vm/drop_caches
    To free dentries and inodes:
        echo 2 > /proc/sys/vm/drop_caches
    To free pagecache, dentries and inodes:
        echo 3 > /proc/sys/vm/drop_caches

The Java application was traversing the NFS mount and accessing a large number of files. This created a large number of nfs_inode_cache entries and, in turn, a large dcache.

Tuning vm.vfs_cache_pressure, so that the kernel reclaims dentries and inodes more readily, would be a persistent solution for this.

From kernel documentation,

    vfs_cache_pressure
    ------------------

    Controls the tendency of the kernel to reclaim the memory which is used for
    caching of directory and inode objects.

    At the default value of vfs_cache_pressure=100 the kernel will attempt to
    reclaim dentries and inodes at a “fair” rate with respect to pagecache and
    swapcache reclaim. Decreasing vfs_cache_pressure causes the kernel to prefer
    to retain dentry and inode caches. When vfs_cache_pressure=0, the kernel will
    never reclaim dentries and inodes due to memory pressure and this can easily
    lead to out-of-memory conditions. Increasing vfs_cache_pressure beyond 100
    causes the kernel to prefer to reclaim dentries and inodes.
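Before changing the setting, it helps to check the current value and what it implies. The sketch below reads the value from procfs and maps it to the behaviour described in the documentation quoted above. Both function names are hypothetical, and the wording of the returned strings is a paraphrase of that documentation.

```python
from pathlib import Path

DEFAULT_PRESSURE = 100  # default value according to the kernel documentation


def read_vfs_cache_pressure(path="/proc/sys/vm/vfs_cache_pressure"):
    """Read the current vm.vfs_cache_pressure value from procfs."""
    return int(Path(path).read_text().strip())


def describe_pressure(value):
    """Summarise what a value means, following the documentation quoted above."""
    if value < 0:
        raise ValueError("vfs_cache_pressure cannot be negative")
    if value == 0:
        return "never reclaim dentries and inodes (risk of out-of-memory)"
    if value < DEFAULT_PRESSURE:
        return "prefer to retain dentry and inode caches"
    if value == DEFAULT_PRESSURE:
        return "reclaim dentries and inodes at a 'fair' rate"
    return "prefer to reclaim dentries and inodes"


if __name__ == "__main__":
    current = read_vfs_cache_pressure()
    print(f"vm.vfs_cache_pressure = {current}: {describe_pressure(current)}")
```

To make a new value persistent across reboots, the usual approach is to add a `vm.vfs_cache_pressure = <value>` line to /etc/sysctl.conf and load it with `sysctl -p`. A suitable value depends on the workload and should be found by testing.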

Share this

Share this

More resources

Learn more about Pythian by reading the following blogs and articles.

CPU utilization is not a useful metric

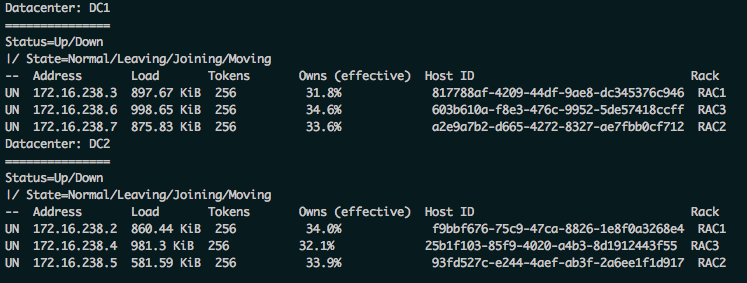

Cassandra information using nodetool

What Does oracle-rdbms-server-11gR2-preinstall Not Do?

Ready to unlock value from your data?

With Pythian, you can accomplish your data transformation goals and more.